Building Zuka: A Voice That Can't Be Killed

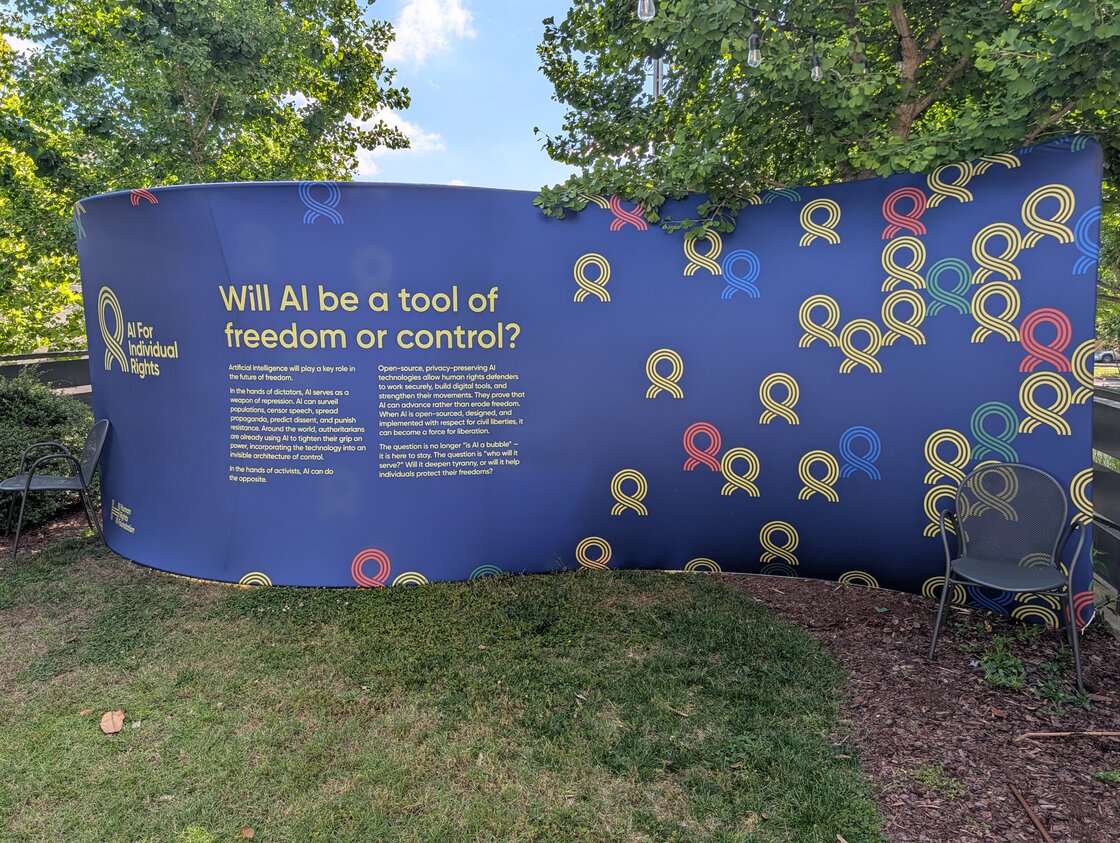

We built an AI persona platform for activists in 48 hours at HRF's second AI Hack for Freedom. It protects the humans behind the voice — and makes the voice impossible to silence.

Three days before the hackathon, a Rwandan YouTuber with 60,000 followers died in custody on the day he was reportedly supposed to be released. By Sunday, we had a working prototype that hides the human behind the voice, so the regime has no one to find and no way to shut the voice up.

Back in January, the Soapbox team went to Bitcoin Park Austin for the first AI Hack for Freedom hackathon, sponsored by the Human Rights Foundation (HRF). We built Agora with Leopoldo López: a censorship-resistant platform for Venezuelan activists that's now in production.

This past weekend, HRF ran the second edition at Bitcoin Park's other location in Nashville. I came back for the same reason Soapbox went the first time: pair developers with real activists facing real problems. No hypothetical users. No imagined use cases. Just people whose lives depend on the tools we build.

My team won second place at the Nashville hackathon, just like we did in Austin. $15,000 in prizes. But that's not what made the weekend.

When the Problem Walks Into the Room

I was paired with Anaïse Kanimba, a Rwandan-American human rights activist. Her father is Paul Rusesabagina — the man depicted in Hotel Rwanda, who was kidnapped and detained by the Kagame regime. She's spent her adult life advocating for political prisoners. She knows what authoritarian states do to people who speak.

Anaïse didn't come with a wishlist of features. She came with a fresh, brutal data point. Three days before the hackathon, a Rwandan YouTuber named Aimable Karasira died in custody at a hospital in Kigali. He ran a YouTube channel called Ukuri Mbona — "The Truth As I See It" — with more than 60,000 followers. He had been arrested in 2021 for criticizing the Rwandan Patriotic Front and the Kagame government, sentenced to five years for "inciting division," and reportedly tortured while detained. He died on the day he was supposed to be released. The Rwandan Correctional Service called it a medication overdose. Exiled Rwandan human rights activists called it suspicious and demanded an independent investigation.

That was the news Anaïse showed up with.

Her ask was as simple as it gets:

"You can't speak in Rwanda without being silenced or killed. I want an AI influencer that can't be killed or shut down."

— Anaïse Kanimba

That's the problem. Now figure out the product.

What "Can't Be Silenced" Actually Means

Authoritarian regimes have a playbook for shutting voices down. We listed it on a whiteboard:

- Physical violence. Kill the human. The voice goes silent.

- Platform takedowns. Pressure YouTube, X, or Meta to remove the account.

- Domain seizures. Block the website at the ISP level.

- Bank account freezes. Choke off the money keeping the operation running.

- Identity exposure. Once the regime knows who you are, they can find your family.

Every centralized tool fails on at least one of these. Most fail on all of them.

For an AI persona to actually be ungovernable by a state, every single one of these has to break differently than it does on traditional infrastructure. That's the design constraint.

48 Hours to Build Something Real

I was the only Soapbox attendee at the event this time around, and the only Soapbox member on the team. The hackathon's pairing model put me on a four-person team with three people I'd never worked with before:

- Anaïse — captain, product voice, demo lead, persona sign-off

- Me — frontend, Nostr integration, PWA, Android via Capacitor

- Jim — PPQ AI integration, AI video creation, video studio implementation

- Topher — Breez Spark wallet, donation surfacing, AI assistant wizard

None of this would have shipped in 48 hours without MKStack. It's Soapbox's open-source starter kit for Nostr apps — React, Vite, Tailwind, shadcn/ui, and Nostrify already wired together, plus a library of pre-built components for the things every Nostr app needs: login, profiles, posts, zaps, relays. The tech stack and the building blocks were locked in before we landed in Nashville.

By Sunday morning we had a working app.

Zuka is a PWA — and an Android app — for creating and operating AI personas. The name is Kinyarwanda for "rebirth." Anaïse picked it. The metaphor is exactly right: even with a fresh face, an old voice can come back stronger.

Here's what we shipped:

- Persona-owned Nostr identity. Every persona is its own Nostr keypair. The human running it is a separate keypair that never posts publicly. To outside observers, there's no link between them.

- Persona-owned Lightning wallet. Each persona gets its own self-custodial Spark wallet via the Breez SDK. It accepts zaps, exposes a Lightning Address, and pays for its own AI inference.

- Self-sustaining AI. AI inference runs through PPQ. The persona's wallet auto-tops-up its PPQ credits via NWC (Nostr Wallet Connect). Donations sustain the voice: the persona literally pays for its own thoughts.

- Encrypted backup on Nostr. Persona keys + wallet seed + assets are encrypted (NIP-44) to the operator's pubkey and published as a kind 30078 event. No identifying tags. Indistinguishable from any other Nostr application-data event.

- Video-first composer. Idea + sources + style → AI-generated video. Posted to Nostr (kind 1 with NIP-92), optionally cross-posted to X / Facebook / Instagram via webhook.

- Resurrection. Lose your phone? Log into the operator account on a new device, fetch the encrypted backups from relays, decrypt them locally, and every persona is back. Funded. Publishable.
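The backup and resurrection steps above can be sketched in a few lines of TypeScript. This is an illustration under stated assumptions, not Zuka's actual code: the `PersonaBackup` shape, the opaque `d` tag value, and the `encrypt`/`decrypt` callbacks (stand-ins for NIP-44 encryption to the operator's own key) are all hypothetical, and signing and relay I/O are left out. The one protocol detail it leans on is that kind 30078 events are addressable by their `d` tag, so a restore should keep only the newest event per tag.

```typescript
// Unsigned Nostr event fields (NIP-01 minus id, pubkey, and sig).
interface EventTemplate {
  kind: number;
  created_at: number;
  tags: string[][];
  content: string;
}

// Hypothetical payload for one persona; the real backup format is not shown in this post.
interface PersonaBackup {
  personaSecretKeyHex: string;
  walletMnemonic: string;
  profile: { name: string; bio: string };
}

// Stand-ins for NIP-44 encrypt/decrypt between the operator's key and itself.
type Cipher = (text: string) => string;

// Build the application-data event that carries one encrypted persona.
function buildBackupTemplate(
  backup: PersonaBackup,
  slot: string, // opaque `d` tag value; assumed random so it reveals nothing
  encrypt: Cipher,
  now: number,
): EventTemplate {
  return {
    kind: 30078,
    created_at: now,
    tags: [["d", slot]],
    content: encrypt(JSON.stringify(backup)),
  };
}

// Different relays may hold different versions; keep the newest per `d` tag.
function latestPerSlot(events: EventTemplate[]): EventTemplate[] {
  const newest = new Map<string, EventTemplate>();
  for (const ev of events) {
    if (ev.kind !== 30078) continue;
    const slot = ev.tags.find((t) => t[0] === "d")?.[1];
    if (slot === undefined) continue;
    const seen = newest.get(slot);
    if (!seen || ev.created_at > seen.created_at) newest.set(slot, ev);
  }
  return Array.from(newest.values());
}

// "Resurrection": decrypt every surviving backup on the new device.
function restorePersonas(events: EventTemplate[], decrypt: Cipher): PersonaBackup[] {
  return latestPerSlot(events).map(
    (ev) => JSON.parse(decrypt(ev.content)) as PersonaBackup,
  );
}
```

In a real app the `encrypt` and `decrypt` callbacks would wrap a NIP-44 implementation, and the events passed to `restorePersonas` would come from a relay query filtered by the operator's pubkey and kind 30078.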

The Demo Moment

We pitched to the HRF judges with a six-minute live demo.

We built a persona on stage: filled in the wizard, generated a face with AI, minted a Nostr keypair, and created a Lightning wallet. Then we composed a thought, watched the AI style it in the persona's voice, and published it to Nostr.

Our plan was to close with a kill-and-resurrect beat — close the laptop, log the persona back in on a second device, prove the voice doesn't depend on us. We ran out of time. So instead, we played a video we'd generated earlier in the weekend: a short clip of the persona we'd built, talking in his own voice, on his own face, poking fun at the regime's tactics. The audience laughed.

That worked too. Maybe better. The point of the demo wasn't really "the keys are portable" — it was "this voice belongs to itself now, and it has something to say." A laugh at a dictator's expense, delivered by a face that doesn't exist, paid for by money that can't be silenced.

We took second place. $15,000 in prizes.

Why Nostr and Bitcoin (Again)

Earlier this year, after Austin, I wrote about why we used Nostr and Bitcoin to build Agora. The same logic applies here, sharpened by a different threat model.

Nostr eliminates single points of failure for identity and publishing. There is no company to pressure, no server to seize, no database to compromise. The persona's identity is a cryptographic key, and its signed posts are replicated across hundreds of relays worldwide. To kill the voice, you'd have to take down every relay, and the moment one comes back online, the persona's posts can be rebroadcast there, resurrected, reborn.
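Here is a toy model of that rebroadcast step. Everything in it is an assumption made for illustration: `FakeRelay` is an in-memory stand-in (real relays speak the NIP-01 protocol over WebSocket and verify signatures), and `rebroadcast` is a hypothetical helper. The idea it shows is that a signed event carries its own proof of authorship, so any surviving copy can be pushed to a relay that lost it.

```typescript
// A fully signed Nostr event as defined in NIP-01.
interface SignedEvent {
  id: string;
  pubkey: string;
  created_at: number;
  kind: number;
  tags: string[][];
  content: string;
  sig: string;
}

// In-memory stand-in for a relay. Not a real client or server.
class FakeRelay {
  private store = new Map<string, SignedEvent>();
  online = true;

  // Returns true if the relay accepted the event.
  publish(ev: SignedEvent): boolean {
    if (!this.online) return false;
    this.store.set(ev.id, ev);
    return true;
  }

  has(id: string): boolean {
    return this.store.has(id);
  }
}

// Push every archived event the relay is missing; returns how many were sent.
function rebroadcast(archive: SignedEvent[], relay: FakeRelay): number {
  let sent = 0;
  for (const ev of archive) {
    if (!relay.has(ev.id) && relay.publish(ev)) sent++;
  }
  return sent;
}
```

The archive can be any copy of the events: another relay, a supporter's device, or the operator's local cache. Because the signature travels with each event, the relay does not need to trust whoever delivers it.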

Bitcoin solves the money problem the same way it does for human activists. A persona that depends on a credit card or a bank account is a persona that can be cut off in a single phone call to a payment processor. A persona with its own self-custodial Lightning wallet is one that gets paid in sats from anywhere, by anyone, without permission.

But Zuka adds a new dimension: the AI inference is paid for by the persona itself. We're not running an OpenAI key on a server somewhere that can be revoked. Each persona has its own PPQ credits, funded directly by donations from supporters via NWC auto-topup. As long as someone in the world is willing to spend sats to keep the voice alive, the voice stays alive.
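The auto-topup decision can be sketched as a small pure function. This is a hedged sketch, not Zuka's implementation: `TopUpPolicy`, `planTopUp`, and the choice to count credits in sats are assumptions made for illustration, and the actual PPQ and NWC calls are deliberately left out.

```typescript
// Hypothetical policy knobs; all amounts are in sats for simplicity.
interface TopUpPolicy {
  minCreditsSats: number; // top up when credits fall below this
  topUpSats: number;      // how much to buy per top-up
  reserveSats: number;    // wallet balance that is never spent on inference
}

type TopUpDecision =
  | { action: "none" }
  | { action: "topup"; amountSats: number }
  | { action: "underfunded"; shortfallSats: number };

// Decide what the persona's wallet should do given its current state.
function planTopUp(
  creditsSats: number,
  walletSats: number,
  policy: TopUpPolicy,
): TopUpDecision {
  // Enough inference credit left: nothing to do.
  if (creditsSats >= policy.minCreditsSats) return { action: "none" };

  // Only spend what sits above the reserve.
  const spendable = walletSats - policy.reserveSats;
  if (spendable >= policy.topUpSats) {
    return { action: "topup", amountSats: policy.topUpSats };
  }

  // Not enough donations yet: report how many more sats are needed.
  return { action: "underfunded", shortfallSats: policy.topUpSats - spendable };
}
```

A caller would act on a `topup` decision by paying an invoice over NWC, and could surface an `underfunded` decision to supporters as a donation prompt.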

That's the architecture activists asked for. That's what we shipped. An AI influencer that cannot be silenced.

What's Next

We're transitioning from hackathon V1 to a more ambitious V2.

V2 is the agent-driven character creator. Right now the persona wizard is form-based — you fill in name, bio, system prompt, upload a face. V2 replaces it with a real interview: the embedded AI asks you about your values, your region, what this voice stands for, and proposes a persona based on your answers. Plus video generation with persona likeness and voice consistency, multi-language interviews (starting with Kinyarwanda + English), and multi-operator-per-device for activists who need to compartmentalize persona groups.

We're also pursuing app store releases. Zapstore was first — censorship-resistant, no gatekeepers, decentralized. Then Google Play and the Apple App Store via the AOS collective for wider reach. Websites can be blocked. Apps distributed through Zapstore can't.

The Point

Soapbox keeps showing up to these hackathons because the problems are real. In January, the team built Agora with Leopoldo, who was asking how Venezuelan activists find each other when X gets banned and bank accounts are frozen. This time I was the one in the room, paired with Anaïse, who was asking how a voice survives the murder of the person speaking.

The answers aren't in product spec documents. They're in the protocols. Nostr lets a voice exist without a platform. Bitcoin lets it earn without a bank.

Stack those together and you get something new: a voice the regime can't trace back to a person to target. A persona that pays for its own thoughts. An influencer that can't be killed or shut down, because there's no one specific to find, and nothing specific to seize.

That's Zuka. It's named rebirth for a reason.

We built it in a weekend. We're just getting started.

Try Zuka, follow development on GitHub, or build your own freedom tech with MKStack.

Soapbox is funded by grants and donations, not ads or data sales.

Everything we build is open source and belongs to the community. Help us keep it that way.